Pixel Slices

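Before modeling pixel variation, it helps to have a sense of how the pixels of an image change over time. Consider a scene in which a pedestrian walks through the camera's field of view. We pick a line segment in the image, record how the pixels on it change over a given number of frames, and then build a model of those fluctuations.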

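First we need to sample the line segment. Sampling is done with the function cvInitLineIterator() and the macro CV_NEXT_LINE_POINT().

cvInitLineIterator() initializes the iterator and returns the number of points on the line to iterate over.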

```c
// core_c.h

/* Initializes line iterator. Initially, line_iterator->ptr will point
   to pt1 (or pt2, see left_to_right description) location in the image.
   Returns the number of pixels on the line between the ending points. */
CVAPI(int) cvInitLineIterator( const CvArr* image, CvPoint pt1, CvPoint pt2,
                               CvLineIterator* line_iterator,
                               int connectivity CV_DEFAULT(8),
                               int left_to_right CV_DEFAULT(0));

/* Moves iterator to the next line point */
#define CV_NEXT_LINE_POINT( line_iterator )                     \
{                                                               \
    int _line_iterator_mask = (line_iterator).err < 0 ? -1 : 0; \
    (line_iterator).err += (line_iterator).minus_delta +        \
        ((line_iterator).plus_delta & _line_iterator_mask);     \
    (line_iterator).ptr += (line_iterator).minus_step +         \
        ((line_iterator).plus_step & _line_iterator_mask);      \
}
```

Each call to CV_NEXT_LINE_POINT(line_iterator) advances line_iterator to the next pixel on the line.

```c
/* Line iterator state: */
typedef struct CvLineIterator
{
    /* Pointer to the current point: */
    uchar* ptr;

    /* Bresenham algorithm state: */
    int err;
    int plus_delta;
    int minus_delta;
    int plus_step;
    int minus_step;
}
CvLineIterator;
```
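The iterator state above belongs to Bresenham's line algorithm. As a rough illustration of what the iteration visits, here is a minimal sketch in plain C++ with no OpenCV dependency. `linePoints` is a hypothetical helper, not an OpenCV function, and it uses the textbook form of the algorithm rather than OpenCV's masked-increment variant.

```cpp
#include <cstdlib>
#include <utility>
#include <vector>

// Hypothetical helper (not part of OpenCV): returns every integer pixel
// coordinate on the 8-connected line from (x0, y0) to (x1, y1), inclusive.
std::vector<std::pair<int, int>> linePoints(int x0, int y0, int x1, int y1)
{
    std::vector<std::pair<int, int>> pts;
    const int dx = std::abs(x1 - x0);
    const int dy = std::abs(y1 - y0);
    const int sx = (x0 < x1) ? 1 : -1;   // step direction along x
    const int sy = (y0 < y1) ? 1 : -1;   // step direction along y
    int err = dx - dy;                   // Bresenham error term

    for (;;) {
        pts.emplace_back(x0, y0);
        if (x0 == x1 && y0 == y1) break;
        const int e2 = 2 * err;
        if (e2 > -dy) { err -= dy; x0 += sx; }
        if (e2 < dx)  { err += dx; y0 += sy; }
    }
    return pts;
}
```

For the vertical segment from (100, 100) to (100, 150) used in this post, the sketch visits 51 pixels, one per row.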

```cpp
#include <stdio.h>
#include "cv.h"
#include "highgui.h"

int main(void)
{
    const char* avifile = "..\\avi\\walk.avi";
    CvCapture* capture = cvCreateFileCapture(avifile);
    if (!capture) return 1;                 // could not open the video

    IplImage* rawImage;
    CvLineIterator iterator;
    CvPoint pt1 = cvPoint(100, 100);
    CvPoint pt2 = cvPoint(100, 150);

    FILE* fptrb = fopen("blines.csv", "w");
    FILE* fptrg = fopen("glines.csv", "w");
    FILE* fptrr = fopen("rlines.csv", "w");
    if (!fptrb || !fptrg || !fptrr) return 1;

    for (;;) {
        if (!cvGrabFrame(capture)) break;
        rawImage = cvRetrieveFrame(capture);  // fetch the frame grabbed by cvGrabFrame()
        if (!rawImage) break;
        // cvShowImage("1", rawImage);
        // cvWaitKey(0);

        int max_buffer = cvInitLineIterator(rawImage, pt1, pt2, &iterator, 8, 0);
        for (int j = 0; j < max_buffer; j++) {
            fprintf(fptrb, "%d,", iterator.ptr[0]);  // blue
            fprintf(fptrg, "%d,", iterator.ptr[1]);  // green
            fprintf(fptrr, "%d,", iterator.ptr[2]);  // red
            iterator.ptr[2] = 255;                   // mark the sampled pixel in red
            CV_NEXT_LINE_POINT(iterator);
        }
        // Output the data in rows: one row per frame.
        // "\n" is the portable line terminator; a bare "\r" is not.
        fprintf(fptrb, "\n");
        fprintf(fptrg, "\n");
        fprintf(fptrr, "\n");
    }

    fclose(fptrb);
    fclose(fptrg);
    fclose(fptrr);
    cvReleaseCapture(&capture);
    // rawImage is owned by the capture and must not be released here.
    return 0;
}
```

Averaging Background Method

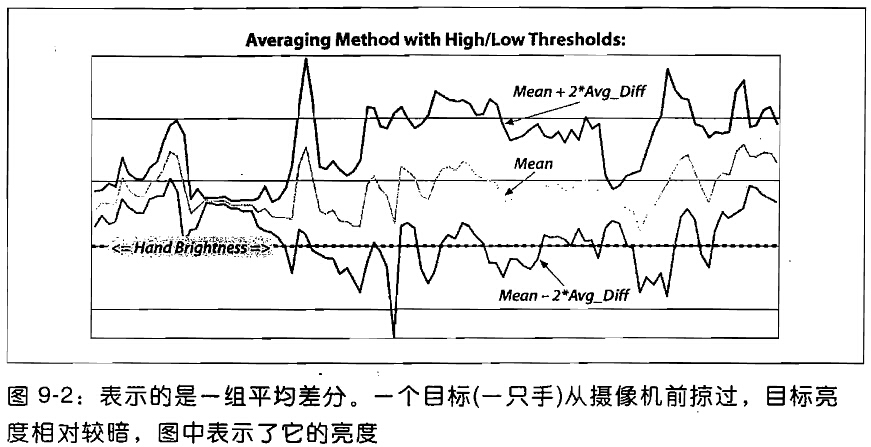

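Basic idea: compute the mean and standard deviation of every pixel and use them as its background model. For the line of pixels in the pedestrian example, the variation of each pixel over the video can be described by its mean and its average difference.

During the background-learning period, an error value is computed from the values of corresponding pixels in two consecutive frames, and for each pixel we accumulate both its mean value and the mean of that error. The background threshold range is then the mean value plus or minus a constant multiple of the mean error (the scale factor has to be tuned by hand). At detection time, if every channel of an incoming pixel falls inside this range, the pixel is classified as background and marked 0; otherwise it is foreground and marked 255.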

```cpp
///////////////////////////////////////////////////////////////////////////////
// Accumulate average and ~std (really absolute difference) image and use
// this to detect background and foreground
//
// Typical way of using this is to:
//   AllocateImages();
//   // loop for N images to accumulate background differences
//   accumulateBackground();
//   // When done, turn this into our avg and std model with high and low bounds
//   createModelsfromStats();
//   // Then use the function to return background in a mask
//   // (255 == foreground, 0 == background)
//   backgroundDiff(IplImage *I, IplImage *Imask, int num);
//   // Then tune the high and low difference from average image background
//   // acceptance thresholds
//   float scalehigh, scalelow;  // Set these, defaults are 7 and 6.
//                               // Note: scalelow is how many average
//                               // differences below average
//   scaleHigh(scalehigh);
//   scaleLow(scalelow);
//   // That is, change the scale high and low bounds for what should be
//   // background to make it work.
//   // Then continue detecting foreground in the mask image
//   backgroundDiff(IplImage *I, IplImage *Imask, int num);
//
// NOTES: num is camera number which varies from 0 ... NUM_CAMERAS - 1.
//        Typically you only have one camera, but this routine allows you
//        to index many.
//
#ifndef AVGSEG_
#define AVGSEG_

#include "cv.h"       // define all of the opencv classes etc.
#include "highgui.h"
#include "cxcore.h"

// IMPORTANT DEFINES:
#define NUM_CAMERAS    1    // This function can handle an array of cameras
#define HIGH_SCALE_NUM 7.0  // How many average differences from average image
                            // on the high side == background
#define LOW_SCALE_NUM  6.0  // How many average differences from average image
                            // on the low side == background

void AllocateImages(IplImage *I);
void DeallocateImages();
void accumulateBackground(IplImage *I, int number = 0);
void scaleHigh(float scale = HIGH_SCALE_NUM, int num = 0);
void scaleLow(float scale = LOW_SCALE_NUM, int num = 0);
void createModelsfromStats();
void backgroundDiff(IplImage *I, IplImage *Imask, int num = 0);

#endif
```

Allocating temporary memory, and releasing it once background modeling is finished

```cpp
#include "ch9_AvgBackground.h"

// GLOBAL storage
// Float, 3-channel images
IplImage *IavgF[NUM_CAMERAS], *IdiffF[NUM_CAMERAS], *IprevF[NUM_CAMERAS],
         *IhiF[NUM_CAMERAS], *IlowF[NUM_CAMERAS];
IplImage *Iscratch, *Iscratch2;
// Float, 1-channel images
IplImage *Igray1, *Igray2, *Igray3;   // the original was missing this semicolon
IplImage *Ilow1[NUM_CAMERAS], *Ilow2[NUM_CAMERAS], *Ilow3[NUM_CAMERAS],
         *Ihi1[NUM_CAMERAS], *Ihi2[NUM_CAMERAS], *Ihi3[NUM_CAMERAS];
// Byte, 1-channel image
IplImage *Imaskt;

// Counts number of images learned for averaging later
float Icount[NUM_CAMERAS];

void AllocateImages(IplImage *I)  // I is just a sample for allocation purposes
{
    for (int i = 0; i < NUM_CAMERAS; i++) {
        IavgF[i]  = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 3);
        IdiffF[i] = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 3);
        IprevF[i] = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 3);
        IhiF[i]   = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 3);
        IlowF[i]  = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 3);
        Ilow1[i]  = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
        Ilow2[i]  = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
        Ilow3[i]  = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
        Ihi1[i]   = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
        Ihi2[i]   = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
        Ihi3[i]   = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
        cvZero(IavgF[i]);
        cvZero(IdiffF[i]);
        cvZero(IprevF[i]);
        cvZero(IhiF[i]);
        cvZero(IlowF[i]);
        Icount[i] = 0.00001;  // Protect against divide by zero
    }
    Iscratch  = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 3);
    Iscratch2 = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 3);
    Igray1    = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
    Igray2    = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
    Igray3    = cvCreateImage(cvGetSize(I), IPL_DEPTH_32F, 1);
    Imaskt    = cvCreateImage(cvGetSize(I), IPL_DEPTH_8U, 1);
    cvZero(Iscratch);
    cvZero(Iscratch2);
}

void DeallocateImages()
{
    for (int i = 0; i < NUM_CAMERAS; i++) {
        cvReleaseImage(&IavgF[i]);
        cvReleaseImage(&IdiffF[i]);
        cvReleaseImage(&IprevF[i]);
        cvReleaseImage(&IhiF[i]);
        cvReleaseImage(&IlowF[i]);
        cvReleaseImage(&Ilow1[i]);
        cvReleaseImage(&Ilow2[i]);
        cvReleaseImage(&Ilow3[i]);
        cvReleaseImage(&Ihi1[i]);
        cvReleaseImage(&Ihi2[i]);
        cvReleaseImage(&Ihi3[i]);
    }
    cvReleaseImage(&Iscratch);
    cvReleaseImage(&Iscratch2);
    cvReleaseImage(&Igray1);
    cvReleaseImage(&Igray2);
    cvReleaseImage(&Igray3);
    cvReleaseImage(&Imaskt);
}
```

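Learning: accumulate the background image and the absolute value of the frame-to-frame differences.

cvCvtScale() converts the original 8-bit, 3-channel color image into a 3-channel float image. The float images are then accumulated into IavgF. cvAbsDiff() computes the absolute difference between consecutive frames, and that difference image is accumulated into IdiffF. After each accumulation the image counter Icount is incremented. This counter is a global variable that is used later to compute the averages.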

```cpp
// Accumulate the background statistics for one more frame.
// We accumulate the images, the image differences and the count of images
// for the routine createModelsfromStats() to work on after we're done
// accumulating N frames.
// I       Background image, 3 channel, 8u
// number  Camera number
void accumulateBackground(IplImage *I, int number)
{
    static int first = 1;           // nb. Not thread safe
    cvCvtScale(I, Iscratch, 1, 0);  // To float
    if (!first) {
        cvAcc(Iscratch, IavgF[number]);    // add the frame to the accumulator
        // dst(x,y,c) = abs(src1(x,y,c) - src2(x,y,c))
        cvAbsDiff(Iscratch, IprevF[number], Iscratch2);
        cvAcc(Iscratch2, IdiffF[number]);
        Icount[number] += 1.0;
    }
    first = 0;
    cvCopy(Iscratch, IprevF[number]);
}
```
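To make the bookkeeping concrete, the accumulation can be reduced to a single pixel and a single channel. The following is a minimal sketch under that assumption. `PixelStats` and its members are hypothetical names standing in for the IavgF, IdiffF, IprevF and Icount images, and unlike the original it guards against an empty count with an explicit check instead of a small initial value.

```cpp
#include <cmath>

// Hypothetical scalar stand-in for IavgF / IdiffF / IprevF / Icount.
struct PixelStats {
    double sum = 0.0;      // running sum of pixel values
    double diffSum = 0.0;  // running sum of |current - previous|
    double prev = 0.0;     // value seen in the previous frame
    double count = 0.0;    // number of accumulated frames
    bool first = true;     // the first frame only seeds `prev`

    void add(double v)
    {
        if (!first) {
            sum += v;
            diffSum += std::fabs(v - prev);
            count += 1.0;
        }
        first = false;
        prev = v;
    }

    // Averages used later to build the model; 0 if nothing was accumulated.
    double mean() const { return count > 0.0 ? sum / count : 0.0; }
    double meanAbsDiff() const { return count > 0.0 ? diffSum / count : 0.0; }
};
```

As in accumulateBackground(), the very first frame is not added to the sums; it only provides the reference for the first difference.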

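Computing the mean and a variance-like measure (the average absolute difference) for each pixel

Mean absolute difference: △ = (│△1│ + │△2│ + … + │△n│) / n, where △ is the mean absolute error and △1, △2, …, △n are the absolute errors of the individual measurements. In its usual definition it is the average of the absolute deviations of the individual observations from the arithmetic mean. Because every deviation is taken in absolute value, positive and negative deviations cannot cancel as they do in the plain mean error, so the mean absolute error reflects the actual size of the error better. Note that accumulateBackground() averages the absolute difference between consecutive frames, a cheaper quantity that plays the same role here.

The first moment is the population mean. How should the dispersion among samples be measured? One option is the mean deviation E|X - EX|, but it is awkward to work with, whereas E(X - EX)^2 is considerably easier to compute.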
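The two dispersion measures mentioned above can be compared on a small sample. This is an illustrative sketch only; `sampleMean`, `meanAbsDeviation` and `stdDeviation` are hypothetical helper names, and the standard deviation is the population form (dividing by n).

```cpp
#include <cmath>
#include <vector>

// Arithmetic mean; returns 0 for an empty sample.
double sampleMean(const std::vector<double>& x)
{
    if (x.empty()) return 0.0;
    double s = 0.0;
    for (double v : x) s += v;
    return s / static_cast<double>(x.size());
}

// E|X - EX|: average absolute deviation from the mean.
double meanAbsDeviation(const std::vector<double>& x)
{
    if (x.empty()) return 0.0;
    const double m = sampleMean(x);
    double s = 0.0;
    for (double v : x) s += std::fabs(v - m);
    return s / static_cast<double>(x.size());
}

// sqrt(E(X - EX)^2): population standard deviation.
double stdDeviation(const std::vector<double>& x)
{
    if (x.empty()) return 0.0;
    const double m = sampleMean(x);
    double s = 0.0;
    for (double v : x) s += (v - m) * (v - m);
    return std::sqrt(s / static_cast<double>(x.size()));
}
```

For the sample {2, 4, 4, 4, 5, 5, 7, 9} the mean is 5, the mean absolute deviation is 1.5 and the standard deviation is 2. The two measures are of similar magnitude, but the squared form weights large deviations more heavily.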

```cpp
// Once you've learned the background long enough, turn it into a background model
void createModelsfromStats()
{
    for (int i = 0; i < NUM_CAMERAS; i++) {
        cvConvertScale(IavgF[i], IavgF[i], (double)(1.0 / Icount[i]));
        cvConvertScale(IdiffF[i], IdiffF[i], (double)(1.0 / Icount[i]));
        // Make sure diff is always something: after adding 1, the average
        // difference image is at least 1 everywhere
        cvAddS(IdiffF[i], cvScalar(1.0, 1.0, 1.0), IdiffF[i]);
        scaleHigh(HIGH_SCALE_NUM, i);
        scaleLow(LOW_SCALE_NUM, i);
    }
}

// Scale the average difference from the average image high acceptance threshold
void scaleHigh(float scale, int num)
{
    cvConvertScale(IdiffF[num], Iscratch, scale);  // Iscratch = IdiffF * scale
    cvAdd(Iscratch, IavgF[num], IhiF[num]);        // IhiF = IavgF + Iscratch
    cvCvtPixToPlane(IhiF[num], Ihi1[num], Ihi2[num], Ihi3[num], 0);
}

// Scale the average difference from the average image low acceptance threshold
void scaleLow(float scale, int num)
{
    cvConvertScale(IdiffF[num], Iscratch, scale);  // Iscratch = IdiffF * scale
    cvSub(IavgF[num], Iscratch, IlowF[num]);       // IlowF = IavgF - Iscratch
    cvCvtPixToPlane(IlowF[num], Ilow1[num], Ilow2[num], Ilow3[num], 0);
}
```

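scaleHigh(7.0) sets the upper bound: any pixel whose value is more than 7 times the average absolute difference above the mean is treated as foreground. scaleLow(6.0) sets the lower bound: any pixel whose value is more than 6 times the average absolute difference below the mean is treated as foreground. Pixels that fall between the two bounds around the mean are treated as background.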
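For a single pixel and channel, the bounds reduce to two lines of arithmetic. The sketch below assumes the defaults of 7 and 6 and mirrors the `cvAddS` step that adds 1 to the average difference. `Bounds` and `makeBounds` are hypothetical names introduced for illustration.

```cpp
// Hypothetical per-pixel acceptance range.
struct Bounds {
    double low;
    double high;
};

// avg      mean pixel value learned during training
// avgDiff  mean absolute frame-to-frame difference
Bounds makeBounds(double avg, double avgDiff,
                  double scaleHigh = 7.0, double scaleLow = 6.0)
{
    const double d = avgDiff + 1.0;  // same offset as cvAddS(..., 1.0, ...)
    return { avg - scaleLow * d, avg + scaleHigh * d };
}
```

With a mean of 100 and an average difference of 2, the offset difference is 3, so the range is [100 - 6 × 3, 100 + 7 × 3] = [82, 121].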

```cpp
#define cvCvtPixToPlane cvSplit
void cvSplit( const CvArr* src, CvArr* dst0, CvArr* dst1, CvArr* dst2, CvArr* dst3 );
// Splits a multi-channel array into several single-channel arrays,
// or extracts a single channel from the array.
```
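Conceptually, splitting a 3-channel image means de-interleaving its pixel data. The following sketch shows that idea on a flat buffer, assuming tightly packed 3-channel float data with no row padding. `splitChannels` is a hypothetical helper and not how OpenCV implements cvSplit.

```cpp
#include <array>
#include <cstddef>
#include <vector>

// De-interleaves c0,c1,c2,c0,c1,c2,... into three separate planes.
// Trailing values that do not form a full pixel are ignored.
std::array<std::vector<float>, 3>
splitChannels(const std::vector<float>& interleaved)
{
    std::array<std::vector<float>, 3> planes;
    const std::size_t pixels = interleaved.size() / 3;
    for (auto& p : planes) p.reserve(pixels);

    for (std::size_t i = 0; i < pixels; ++i) {
        for (std::size_t c = 0; c < 3; ++c) {
            planes[c].push_back(interleaved[3 * i + c]);
        }
    }
    return planes;
}
```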

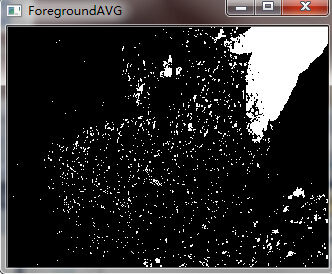

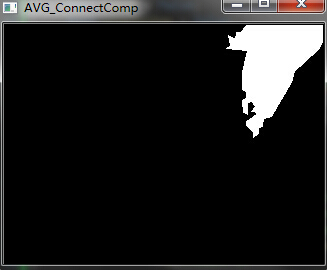

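Once we have a background model together with its high and low thresholds, we can use it to segment an image into foreground (the parts of the image that the background model cannot "explain") and background (any part of the image that lies between the high and low thresholds of the model).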

```cpp
// Create a binary: 0,255 mask where 255 means foreground pixel
// I      Input image, 3 channel, 8u
// Imask  mask image to be created, 1 channel 8u
// num    camera number.
//
void backgroundDiff(IplImage *I, IplImage *Imask, int num)  // Mask should be grayscale
{
    cvCvtScale(I, Iscratch, 1, 0);  // To float
    cvCvtPixToPlane(Iscratch, Igray1, Igray2, Igray3, 0);

    // Channel 1
    cvInRange(Igray1, Ilow1[num], Ihi1[num], Imask);
    // Channel 2
    cvInRange(Igray2, Ilow2[num], Ihi2[num], Imaskt);
    // A pixel is background only if EVERY channel is in range, so the
    // per-channel masks are combined with AND. The original used cvOr,
    // which marks a pixel as background if ANY channel is in range and
    // contradicts the rule stated in the text.
    cvAnd(Imask, Imaskt, Imask);
    // Channel 3
    cvInRange(Igray3, Ilow3[num], Ihi3[num], Imaskt);
    cvAnd(Imask, Imaskt, Imask);

    // Finally, invert the results: 255 == foreground, 0 == background
    cvSubRS(Imask, cvScalar(255), Imask);
}
```
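The per-pixel decision can be written out directly. This sketch assumes the rule stated earlier in the post: a pixel is background only when all three channels lie within their ranges. `ChannelRange` and `classifyPixel` are hypothetical names, and treating both bounds as inclusive is an assumption of the sketch; the exact boundary convention of cvInRange should be checked in the OpenCV documentation.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>

// Hypothetical per-channel acceptance range [low, high].
struct ChannelRange {
    float low;
    float high;
};

// Returns 255 (foreground) if any channel falls outside its range,
// otherwise 0 (background).
std::uint8_t classifyPixel(const std::array<float, 3>& pixel,
                           const std::array<ChannelRange, 3>& model)
{
    for (std::size_t c = 0; c < 3; ++c) {
        if (pixel[c] < model[c].low || pixel[c] > model[c].high) {
            return 255;
        }
    }
    return 0;
}
```

With every channel modeled as [82, 121], the pixel (100, 90, 120) is classified as background, while (100, 90, 200) is foreground because its third channel leaves the range.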

```cpp
#include "ch9_AvgBackground.h"
#include <stdio.h>

// NOTE: cvconnectedComponents() is not declared in ch9_AvgBackground.h;
// its declaration and definition must be provided separately.

int main(int argc, char* argv[])
{
    IplImage* rawImage = 0;
    IplImage *ImaskAVG = 0, *ImaskAVGCC = 0;

    float scalehigh = HIGH_SCALE_NUM;  // default is 7
    float scalelow  = LOW_SCALE_NUM;   // default is 6

    int startcapture = 1;
    int endcapture = 30;

    const char* avifile = "..\\avi\\tree.avi";
    CvCapture* capture = cvCreateFileCapture(avifile);

    // MAIN PROCESSING LOOP:
    if (capture) {
        cvNamedWindow("Raw", 1);              // original video frame
        cvNamedWindow("AVG_ConnectComp", 1);  // mask after connected-component cleanup
        cvNamedWindow("ForegroundAVG", 1);    // raw mask from the averaging method

        int i = -1;
        for (;;) {
            if (!cvGrabFrame(capture)) break;
            rawImage = cvRetrieveFrame(capture);
            ++i;  // count it
            if (!rawImage) break;

            // First time:
            if (0 == i) {
                // Just in case you wonder why the image is white at first
                printf("\n . . . wait for it . . .\n");
                // AVG METHOD ALLOCATION
                AllocateImages(rawImage);  // allocate working memory
                scaleHigh(scalehigh);      // set the high threshold scale
                scaleLow(scalelow);        // set the low threshold scale
                ImaskAVG   = cvCreateImage(cvGetSize(rawImage), IPL_DEPTH_8U, 1);
                ImaskAVGCC = cvCreateImage(cvGetSize(rawImage), IPL_DEPTH_8U, 1);
                cvSet(ImaskAVG, cvScalar(255));
            }

            // This is where we build our background model
            if (i >= startcapture && i < endcapture) {
                // LEARNING THE AVERAGE AND AVG DIFF BACKGROUND
                accumulateBackground(rawImage);
            }
            // When done, create the background model
            if (i == endcapture) {
                createModelsfromStats();
            }
            // Find the foreground, if any, once endcapture frames have passed
            if (i >= endcapture) {
                // FIND FOREGROUND BY AVG METHOD:
                backgroundDiff(rawImage, ImaskAVG);
                cvCopy(ImaskAVG, ImaskAVGCC);
                cvconnectedComponents(ImaskAVGCC, 1, 4.0);  // clean up the foreground mask

                // Display: only "Raw" is in color, the other windows show binary masks
                cvShowImage("Raw", rawImage);
                cvWaitKey(0);
                cvShowImage("AVG_ConnectComp", ImaskAVGCC);
                cvWaitKey(0);
                cvShowImage("ForegroundAVG", ImaskAVG);
                cvWaitKey(0);
            }
        }

        // Clean up; rawImage is owned by the capture and is not released here
        DeallocateImages();
        cvReleaseImage(&ImaskAVG);
        cvReleaseImage(&ImaskAVGCC);
        cvReleaseCapture(&capture);
        cvDestroyAllWindows();
    }
    return 0;
}
```